AI-Generated UGC: Disclosure Best Practices

When to label AI-generated UGC, how to disclose (labels, watermarks, metadata), platform rules, and best practices to stay compliant and build trust.

AI-generated content is everywhere in 2026, from AI-written social media posts to hyper-realistic videos. As this content becomes harder to distinguish from human-made work, transparency matters more than ever. This guide covers when to label AI-generated UGC, how to disclose it, and what the major platforms and regulators require.

Transparency is no longer optional - it's a necessity to maintain trust and comply with regulations. By following these practices, you can stay compliant and build stronger connections with your audience.

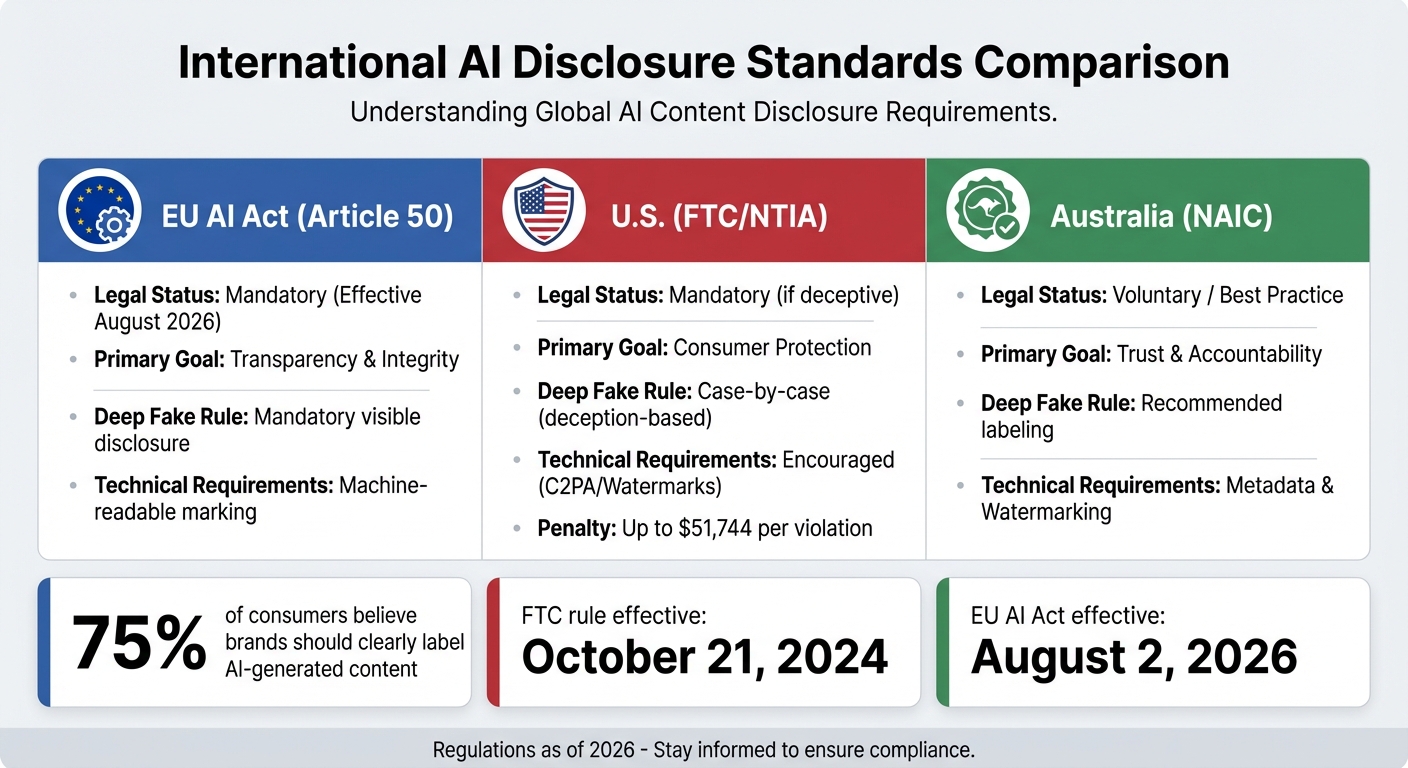

Global AI Disclosure Requirements Comparison: EU, US, and Australia

The rules surrounding AI disclosure have moved beyond suggestions to enforceable regulations with hefty fines. Staying informed about these regulations is critical for protecting your brand and ensuring compliance.

The FTC's Trade Regulation Rule on Consumer Reviews and Testimonials, effective October 21, 2024, sets strict boundaries on AI-generated content. Specifically, it bans fake reviews and testimonials created by AI that misrepresent someone's identity, experience, or even existence. Virtual influencers and AI-based endorsers now face the same disclosure requirements as human endorsers under the updated Endorsement Guides (16 CFR Part 255).

Violations under this rule can cost up to $51,744 each, signaling a shift toward stricter enforcement to protect authenticity. To meet FTC standards, disclosures must be clear and conspicuous - easily visible to consumers, not buried in hashtags or hidden under "read more" links.
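To make "clear and conspicuous" concrete, here is a minimal Python sketch of a pre-publish caption check. It assumes a hypothetical 125-character preview before the "more" link (real truncation varies by platform and device) and an illustrative list of disclosure phrases; neither value comes from the FTC rule.

```python
import re

# Illustrative phrases only; adapt to your own style guide.
DISCLOSURE_PHRASES = ("ai-generated", "made with ai", "created with ai", "ai-assisted")


def disclosure_is_conspicuous(caption: str, preview_chars: int = 125) -> bool:
    """Return True if a disclosure phrase appears as plain text (not only as a
    hashtag) within the part of the caption shown before truncation.

    preview_chars is an assumed cutoff, not a platform-published value.
    """
    preview = caption[:preview_chars].lower()
    # Remove hashtags so that a "#madewithai" tag on its own does not count.
    preview_without_tags = re.sub(r"#\w+", " ", preview)
    return any(phrase in preview_without_tags for phrase in DISCLOSURE_PHRASES)
```

For example, "AI-generated image: our spring lookbook." passes, while "New drop! #madewithai" fails because the only disclosure is a hashtag, and a "made with AI" note placed after 200 characters of other text fails because it sits below the assumed fold.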

Attorney Nilesh (Neal) Patel explains, "The FTC's guidance makes it clear that the principles of authenticity and transparency apply equally to content generated by AI and humans".

While U.S. regulations emphasize preventing consumer deception, international standards often impose additional technical and reporting obligations.

Globally, disclosure requirements vary significantly. The EU AI Act takes a more rigid, rights-focused stance. Under Article 50, which applies from August 2, 2026, providers of generative AI systems must mark outputs in a machine-readable format, and deployers must disclose deepfakes and AI-generated text published to inform the public on matters of public interest. A draft Code of Practice was introduced on December 17, 2025, with the final version expected by mid-2026.

In contrast, Australia has adopted a voluntary approach, promoting best practices like labeling, watermarking, and metadata to enhance consumer trust.

| Feature | EU AI Act (Article 50) | U.S. (FTC/NTIA) | Australia (NAIC) |
|---|---|---|---|
| Legal Status | Mandatory (Aug 2026) | Mandatory (if deceptive) | Voluntary / Best Practice |
| Primary Goal | Transparency & Integrity | Consumer Protection | Trust & Accountability |
| Deepfake Rule | Mandatory visible disclosure | Case-by-case (deception) | Recommended labeling |
| Technical Requirement | Machine-readable marking | Encouraged (C2PA/Watermarks) | Metadata & Watermarking |

These differences highlight contrasting philosophies. The U.S. prioritizes harm prevention through a deception-focused framework, while the EU enforces a prescriptive, rights-driven model. In the EU, developers must ensure technical markings for AI content, while brands and users are responsible for making disclosures visible to consumers. Both approaches allow exceptions for AI-generated content that has undergone human review and editorial oversight.
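As an illustration of machine-readable marking, the Python sketch below tags an asset with a term from the IPTC Digital Source Type vocabulary, which platforms such as Meta read alongside C2PA. This is an assumption-laden sketch: the article does not say that Article 50 requires this particular vocabulary, and the JSON sidecar layout is hypothetical. In practice the value would be embedded in the file's XMP metadata or in a C2PA manifest.

```python
import json
from typing import Optional

# Base URI of the IPTC Digital Source Type controlled vocabulary.
IPTC_DST = "http://cv.iptc.org/newscodes/digitalsourcetype/"


def digital_source_type(fully_generated: bool, contains_ai_elements: bool) -> Optional[str]:
    """Pick an IPTC Digital Source Type term, or None if no AI was used."""
    if fully_generated:
        return IPTC_DST + "trainedAlgorithmicMedia"
    if contains_ai_elements:
        return IPTC_DST + "compositeWithTrainedAlgorithmicMedia"
    return None


def disclosure_sidecar(asset_id: str, fully_generated: bool, contains_ai_elements: bool) -> str:
    """Serialize a JSON sidecar record; this field layout is hypothetical."""
    record = {
        "asset_id": asset_id,
        "Iptc4xmpExt:DigitalSourceType": digital_source_type(fully_generated, contains_ai_elements),
    }
    return json.dumps(record, indent=2)
```

Keeping the mapping in one function means the same decision feeds both the sidecar and whatever tool later writes the value into the file itself.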

Balancing transparency with usability is key when disclosing AI-generated content. Most platforms provide tools to help ensure compliance while maintaining user trust.

Disclosing AI-generated content typically involves three main methods: visible labels, watermarking, and metadata recording.

Disclosure is particularly important for realistic content, such as lifelike depictions of people or events that never occurred. Minor edits (e.g., beauty filters or background noise removal) typically don't require it. Repeated failure to disclose can lead to penalties, including content removal.
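The rule of thumb above can be written as a small decision function. This Python sketch is a simplification under stated assumptions: the edit tags are hypothetical, the "minor edits" set holds only the examples named in this article, and platform policies contain more nuance than two inputs can capture.

```python
from dataclasses import dataclass, field

# Hypothetical tags for edits this article treats as minor.
MINOR_EDITS = {"beauty_filter", "noise_removal", "grammar_fix"}


@dataclass
class Asset:
    realistic: bool  # could a viewer mistake it for a real person, place, or event?
    ai_edits: set[str] = field(default_factory=set)  # hypothetical tags for how AI was used


def needs_ai_label(asset: Asset) -> bool:
    """Label only when AI use goes beyond minor edits AND the result looks real."""
    if not asset.ai_edits:
        return False  # no AI involvement at all
    if asset.ai_edits <= MINOR_EDITS:
        return False  # every AI edit is on the minor list
    return asset.realistic
```

A face-swapped, photorealistic clip returns `True`; a clip that only used a beauty filter and noise removal returns `False`.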

To ensure consistency, familiarize yourself with platform-specific disclosure strategies.

Each platform has its own approach to AI disclosure. YouTube, TikTok, Instagram, and Facebook offer native toggles that apply standardized labels during upload. For example, YouTube introduced its "Altered or Synthetic" policy in March 2024, requiring creators to use a toggle when uploading realistic synthetic media. Starting in 2025, the toggle displays an "Altered or synthetic content" banner beneath the video.

| Platform | Disclosure Method | Label Format | Applies To |
|---|---|---|---|
| YouTube | Upload Toggle | Banner below player or Shorts feed | Realistic altered/synthetic people, places, or events |
| TikTok | "AI-generated" Toggle | Badge beneath username | Realistic synthetic people, voices, or events |
| Meta (Instagram/Facebook) | C2PA Metadata / Manual | "AI Info" or "Made with AI" tag | Photorealistic AI images or C2PA-tagged content |
| Vimeo | Settings Toggle | Label under video title or player | Realistic scenes, altered events, or cloned voices |
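For teams publishing to several platforms, the table above can be kept as data and turned into a per-upload checklist. The Python sketch below transcribes the table as written; platform policies change, so treat the entries as a starting point to verify against each platform's current help pages. The fallback message for unlisted platforms is an assumption.

```python
# Transcribed from the platform table; verify against current platform policies.
PLATFORM_DISCLOSURE = {
    "youtube": ("Upload Toggle", "Banner below player or Shorts feed"),
    "tiktok": ('"AI-generated" Toggle', "Badge beneath username"),
    "meta": ("C2PA Metadata / Manual", '"AI Info" or "Made with AI" tag'),
    "vimeo": ("Settings Toggle", "Label under video title or player"),
}


def upload_checklist(platforms: list[str]) -> list[str]:
    """Return one checklist line per target platform."""
    lines = []
    for name in platforms:
        entry = PLATFORM_DISCLOSURE.get(name.lower())
        if entry is None:
            lines.append(f"{name}: no entry; check the platform's policy manually")
            continue
        method, label = entry
        lines.append(f"{name}: use {method}; expect {label}")
    return lines
```

For example, `upload_checklist(["YouTube", "Threads"])` yields a concrete instruction for YouTube and a reminder to check Threads by hand.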

Meta platforms (Instagram and Facebook) use systems that automatically detect C2PA metadata, applying tags like "AI Info" or "Made with AI." On TikTok, activating the toggle adds a badge beneath the username. YouTube’s research suggests these banners may slightly reduce click-through rates but significantly increase viewer trust.

However, false positives can be an issue. For instance, minor adjustments in Photoshop Beta can trigger an "AI Info" tag on Instagram, even if the content is mostly human-made. To prevent this, re-export assets (for example, with Photoshop's "Save for Web") or use a tool like ExifTool to strip unnecessary metadata before uploading.
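Before uploading, you can check whether a JPEG still carries an embedded C2PA manifest, which is what Meta's automatic detection looks for. The sketch below relies on the C2PA convention of storing manifests in JPEG APP11 segments as JUMBF boxes labeled `c2pa`. Searching for that byte string is a simplification (it neither parses nor validates the manifest), so treat it as a quick QA heuristic; dedicated tools such as the C2PA project's `c2patool` give a full inspection.

```python
def has_c2pa_manifest(jpeg_bytes: bytes) -> bool:
    """Heuristic: True if any APP11 segment before the image data mentions 'c2pa'.

    Only looks for the JUMBF label; it does not parse or validate the manifest.
    """
    if jpeg_bytes[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            raise ValueError(f"expected a segment marker at offset {pos}")
        marker = jpeg_bytes[pos + 1]
        if marker == 0xFF:  # padding byte before a marker
            pos += 1
            continue
        if marker in (0xDA, 0xD9):  # start of scan or end of image: no more metadata
            break
        # Segment length is big-endian and includes its own two bytes.
        length = int.from_bytes(jpeg_bytes[pos + 2:pos + 4], "big")
        payload = jpeg_bytes[pos + 4:pos + 2 + length]
        if marker == 0xEB and b"c2pa" in payload:  # 0xFFEB is APP11
            return True
        pos += 2 + length
    return False
```

Usage: `has_c2pa_manifest(Path("lookbook.jpg").read_bytes())` with `from pathlib import Path`, where the file name is a placeholder. A `True` result on content you consider mostly human-made is a sign the upload may be tagged automatically.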

Incorporating these methods into your workflow can help streamline compliance.

To meet regulatory requirements and follow best practices, integrate AI disclosure into your production process:

Before launching a campaign, conduct QA tests by uploading assets to a staging or private profile. This helps verify which labels appear automatically. According to a Gartner survey, while 45% of CMOs use generative AI, fewer than half have formal policies for labeling AI-created content. Regular audits of campaign materials can ensure labels are applied accurately.
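The staging-profile test boils down to comparing the labels you intended with the labels that actually appeared. This Python sketch assumes you record both by hand (or by whatever means your team uses) as hypothetical asset-ID-to-boolean maps; it flags missing labels and unexpected ones, such as the Photoshop false positives described earlier.

```python
def audit_labels(expected: dict[str, bool], observed: dict[str, bool]) -> dict[str, list[str]]:
    """Compare intended AI labels with what appeared on a staging/private profile.

    Both arguments map a hypothetical asset ID to whether an AI label
    should appear (expected) or did appear (observed).
    """
    missing = sorted(a for a, want in expected.items() if want and not observed.get(a, False))
    unexpected = sorted(a for a, seen in observed.items() if seen and not expected.get(a, False))
    return {"missing_label": missing, "unexpected_label": unexpected}
```

Running this after each staging upload turns the "regular audits" recommendation into a repeatable step with a clear pass condition: both lists empty.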

"We use AI tools to draft or assist in creating some content. All public-facing materials are reviewed by a human before publication. Where appropriate, we disclose AI involvement through in-line statements, metadata, or badges."

– Mokshious

Whenever possible, use the platform’s native disclosure toggle. It’s the most effective way to ensure labels appear in the correct format and location.

Transparency is the cornerstone of trust, and consumer data supports this. The challenge is making sure disclosure doesn't reduce engagement with your content.

The level of disclosure should match the content’s purpose and the associated risks. For high-trust content - like expert advice, financial tips, or health-related information - inline disclosures near the top of the post with clear, specific language are best. On the other hand, for utility-based content, such as definitions or tools, a simple label or a sitewide policy page often suffices.
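The risk-based guidance above maps content types to a disclosure placement. In this Python sketch the category names are hypothetical labels for the examples in the paragraph, and the "review case by case" fallback for other content is an assumption rather than advice from the source.

```python
# Hypothetical category names for the examples in the guidance above.
HIGH_TRUST = {"expert_advice", "financial", "health"}
UTILITY = {"definition", "tool"}


def disclosure_placement(content_type: str) -> str:
    """Suggest where an AI disclosure should go, based on the content's risk."""
    if content_type in HIGH_TRUST:
        return "inline statement near the top of the post"
    if content_type in UTILITY:
        return "simple label or sitewide AI policy page"
    return "review case by case"  # assumed fallback for anything unlisted
```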

"Disclosure is a trust signal, not a ranking requirement. When disclosure helps readers understand how something was created, it builds confidence. When it feels defensive, performative, or unnecessary, it does the opposite." – Dipti Padalkar, Digital Marketing Professional

It’s important to clarify AI’s role in your content. Whether AI was used for drafting, research, or image generation, being transparent about human oversight reassures readers. YouTube’s research highlights this balance: labels like "altered or synthetic" can slightly reduce click-through rates, but they significantly boost trust among viewers aware of AI risks. In this trade-off, long-term credibility outweighs the loss of short-term clicks.

Sharing details about your AI workflow, such as the tools or prompts used, can also draw audiences in by offering a behind-the-scenes look at your creative process. Neutral, straightforward language in disclosures helps maintain authority and accountability. Avoid listing "AI" as the author; keeping human creators visible reinforces trust and responsibility.
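A reusable template helps keep disclosure wording neutral and consistent while naming a human as the responsible party. This Python sketch is illustrative: the sentence pattern is one possible phrasing, and the reviewer name in the example is hypothetical.

```python
def disclosure_note(ai_uses: list[str], reviewer: str) -> str:
    """Compose a neutral disclosure that keeps the human creator accountable."""
    if not ai_uses:
        raise ValueError("no AI use to disclose")
    if len(ai_uses) == 1:
        uses = ai_uses[0]
    else:
        uses = ", ".join(ai_uses[:-1]) + " and " + ai_uses[-1]
    return f"AI tools assisted with {uses}. This post was reviewed and edited by {reviewer}."
```

For example, `disclosure_note(["drafting", "image generation"], "Jordan Lee")` returns "AI tools assisted with drafting and image generation. This post was reviewed and edited by Jordan Lee." Raising an error on an empty list avoids publishing a disclosure that says nothing.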

Practical examples show how ethical AI disclosure can work effectively. Industry frameworks like the IAB's AI Transparency and Disclosure Framework, introduced in January 2026, promote targeted disclosure only when AI use could potentially mislead consumers. This approach prevents "disclosure fatigue" while maintaining ethical integrity.

For instance, marketers often include inline notes at the top of editorial content to clarify AI’s role. On social media, platforms like YouTube and TikTok offer native toggles - such as YouTube’s "Altered or Synthetic" label or TikTok’s "AI-generated" badge - that can be activated during uploads. For routine tasks like grammar corrections or background noise removal, a sitewide editorial policy page suffices instead of labeling every piece individually.

The impact is clear: 46% of consumers are less likely to engage with brands that use AI without transparency. However, brands that openly disclose their AI use are perceived as more trustworthy, especially in a digital world where audiences are increasingly skeptical.

"In a landscape where audiences increasingly question what's real, transparency becomes its own creative advantage." – Kalin Anastasov, Content Manager at Influencer Marketing Hub

As we’ve explored, ethical AI disclosure isn’t just about meeting regulations - it’s about building trust, a cornerstone of meaningful engagement. In today’s digital world, where skepticism runs high, being transparent about AI-generated content can set your brand apart. Research backs this up: 73% of Gen Z and Millennials say clear disclosure would either increase or not affect their likelihood to purchase. At the same time, consumers are twice as likely as advertisers to label brands using AI as "manipulative".

The key is to label content thoughtfully - only when AI significantly impacts authenticity or could mislead. This avoids overwhelming audiences with unnecessary disclosures while upholding ethical standards. Whether it’s inline notes for trusted content or platform-specific toggles for synthetic media, clarity is the priority - without over-explaining.

These principles provide a roadmap for navigating AI disclosure while maintaining trust and transparency.

For Instagram growth, ethical practices are more than just a nice-to-have - they’re essential for long-term success. UpGrow integrates these disclosure standards into its strategy, ensuring genuine engagement and sustainable growth. By combining AI-powered tools with real human expertise, UpGrow delivers organic growth that aligns with Instagram’s guidelines. This approach avoids the pitfalls of automated bots, which can lead to account suppression, and instead focuses on authentic interactions.

With 24/7 access to real-time analytics through a live dashboard, you can monitor performance and refine your strategy on the fly. Features like AI-powered targeting with user-set filters, location-based targeting, profile optimization, and access to a viral content library help you connect meaningfully with your audience. Starting at just $39/month and backed by a growth guarantee, UpGrow is designed for creators and brands who value transparency and authenticity in today’s AI-driven world.

Disclosure is essential when content is created or modified using AI. It helps ensure transparency, builds trust with your audience, and aligns with legal or platform-specific rules. For instance, California's AI Transparency Act requires clear disclosures for AI-generated content starting January 1, 2026. To stay compliant, always review relevant laws and guidelines.

To maintain audience trust and adhere to platform policies, it's best to clearly mark content as AI-generated or AI-modified. Use platform-specific tools like captions, overlays, or labels to make this distinction obvious. Being upfront about using AI tools not only reinforces ethical practices but also ensures compliance with disclosure guidelines.

To make sure human-created content isn’t confused with AI-generated material, it’s important to be upfront. Clearly indicate when AI tools have been part of the process - whether for writing or editing. Use visible labels, captions, or disclaimers to communicate this. Follow the rules and guidelines set by each platform, and keep an open line of communication about AI usage. This approach not only fosters trust but also helps avoid any misclassification, especially as more platforms now require AI labeling to ensure clarity.