AI UGC Disclosure: Why It Matters

How brands must disclose AI-generated UGC to avoid fines, platform penalties, and trust loss—and how to label content correctly.

AI-generated user-generated content (UGC) is reshaping how brands interact with audiences, but it comes with major challenges around trust and compliance. Here’s why this matters:

Brands must clearly disclose AI involvement to avoid penalties, maintain credibility, and align with evolving laws like the EU AI Act (2026) and California’s AI Transparency Act (2026). Failure to comply risks fines, account suspensions, and loss of audience trust.

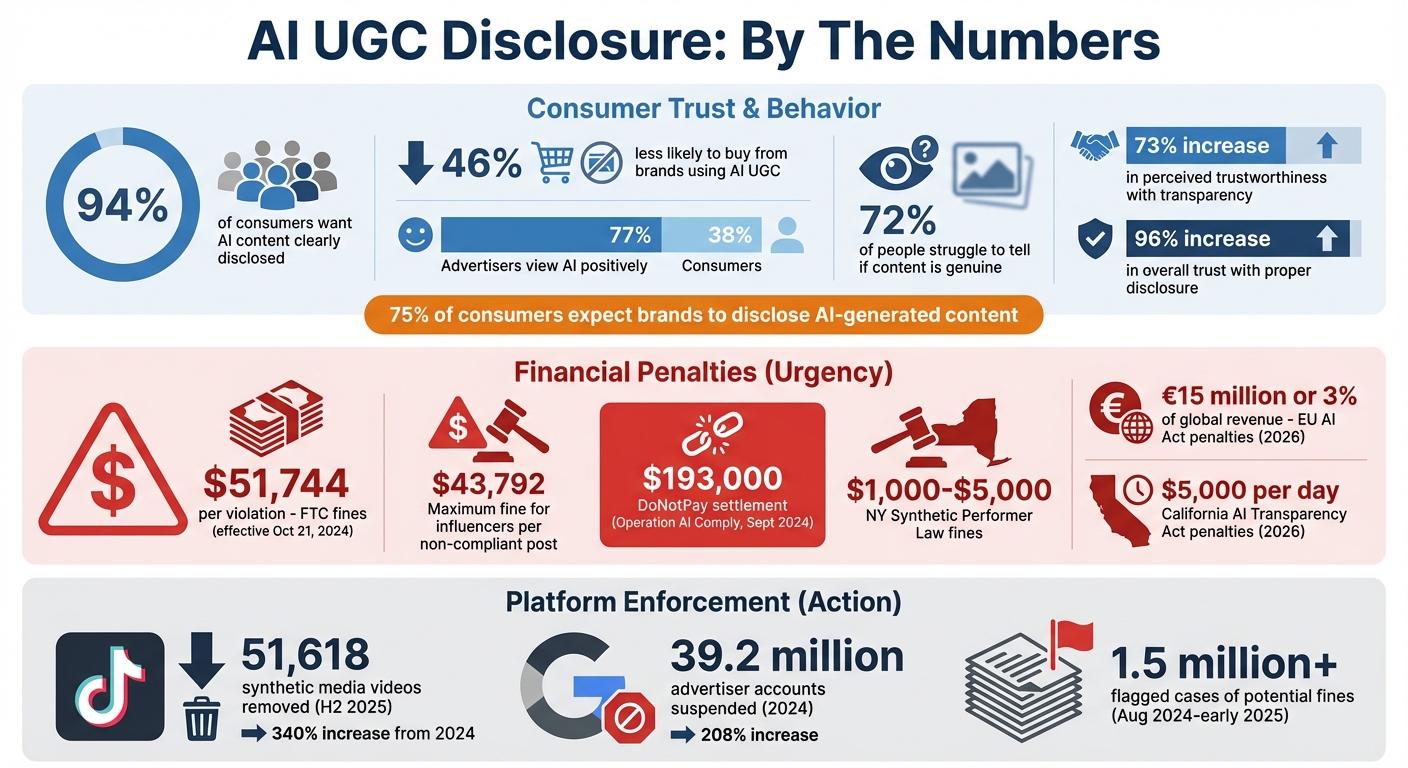

AI UGC Disclosure Statistics: Consumer Trust and Compliance Penalties

Failing to disclose AI-generated content can lead to damaged reputations, hefty fines, and even account suspensions. These risks extend beyond legal troubles - they erode audience trust and invite strict platform enforcement.

When audiences discover AI-generated content that isn’t disclosed, they often feel misled. This disconnect between what’s expected and what’s real creates a significant trust gap.

The numbers paint a stark picture. While 77% of advertisers view AI in a positive light, only 38% of consumers feel the same. Even worse, 72% of people struggle to tell if content is genuine. Studies show that consumers who feel deceived are more likely to boycott brands, which can hurt long-term reputations and reduce ad revenue. On the flip side, companies that are upfront about AI use can increase perceived trustworthiness by 73% and overall trust by 96%.

The issue is clear: AI lacks human experiences. Statements like “I love this coffee” from an AI persona are inherently misleading because no AI has ever tasted coffee.

"Transparency will be vital for brands to maintain long-term consumer relationships and generate positive brand equity" – Elizabeth Herbst-Brady, Chief Revenue Officer at Yahoo

This trust deficit often sets the stage for legal and regulatory actions.

The Federal Trade Commission (FTC) has taken a hard line on deceptive AI practices. As then-FTC Chair Lina Khan put it:

"Using AI tools to trick, mislead, or defraud people is illegal. There is no AI exemption from the laws on the books"

The FTC focuses on deception, not the technology itself. If AI-generated content implies personal experiences that don’t exist, it’s considered misleading.

The financial stakes are steep. Under the FTC’s rule on fake and AI-generated reviews, effective October 21, 2024, civil penalties can hit $51,744 per violation. For influencers, a single non-compliant post can result in fines up to $43,792. Brands are held fully accountable for their AI-generated content. For instance, in September 2024, the FTC’s “Operation AI Comply” led to a $193,000 settlement against DoNotPay for unverified claims about its “robot lawyer” AI. From August 2024 to early 2025, compliance systems flagged over 1.5 million cases of potential fines related to AI-generated user content.

State and international laws add another layer of complexity. New York’s Synthetic Performer Law imposes fines starting at $1,000, escalating to $5,000 for repeated violations. The EU AI Act, set to take effect in August 2026, requires clear disclosure of deepfakes, with penalties reaching €15 million or 3% of a company’s global annual revenue. As Kaeya Majmundar, Co-Founder and CEO of Disclosure Facts, puts it:

"Technology does not excuse deception. Innovation does not override disclosure"

Beyond fines, non-compliance can attract strict action from digital platforms.

Social media platforms are cracking down on undisclosed AI content using both automated detection and manual reporting. Penalties range from content removal to permanent bans.

TikTok, for example, enforces a strict strike system. In the second half of 2025, TikTok removed 51,618 synthetic media videos - a 340% increase from 2024. Before that, in 2024, the platform took down deepfake videos featuring Tom Hanks and MrBeast for violating AI disclosure and impersonation policies.

YouTube follows a warning-based system. Creators who fail to disclose AI content by checking the “Altered or synthetic content” box during upload may face escalating penalties, starting with a 7-day warning and leading to demonetization or account suspension.

Meta platforms like Instagram and Facebook focus on ad rejections and account-level penalties. For ads created with Meta’s AI tools, the platform may automatically apply “AI info” labels. Meanwhile, Google suspended 39.2 million advertiser accounts in 2024 - a 208% jump, largely due to AI-generated impersonation and policy breaches.

Beyond these direct penalties, undisclosed AI content often sees reduced reach and engagement as platforms refine their detection algorithms to prioritize transparency. Combined, these risks - from legal fines to diminished digital visibility - highlight the growing cost of non-compliance. The message is clear: enforcement is intensifying, and the stakes are only getting higher.

Transparency in AI-generated content is no longer optional - it’s a legal requirement. With strict rules and hefty fines in place, understanding these regulations is crucial for avoiding penalties and ensuring compliance.

As of October 21, 2024, the FTC's Rule on the Use of Consumer Reviews and Testimonials (16 CFR Part 465) sets clear expectations for labeling AI-generated content. Under this rule, using AI to create reviews or testimonials that misrepresent authentic consumer experiences is strictly forbidden. For instance, AI cannot be used to fabricate reviews or impersonate real customers' experiences with a product. Additionally, virtual influencers are prohibited from making personal claims like "I love this coffee!" because, as non-human entities, they lack the sensory capacity to form genuine opinions.

The FTC also enforces a high standard for disclosure. Disclosures must be "unavoidable", meaning they cannot require users to click, hover, or take any extra steps to access the information. For compliance, brands should ensure disclosures are placed at the start of text posts or prominently displayed in videos.

Businesses leveraging AI to summarize reviews face further scrutiny. Summaries must fairly represent both positive and negative feedback, ensuring that unfavorable opinions are not suppressed. For US companies operating internationally, this is just the beginning of a complex regulatory landscape.

US marketers targeting audiences abroad must navigate additional rules. The EU AI Act, set to take effect in August 2026, requires AI-generated content to include clear, machine-readable metadata. Article 50 specifically mandates disclosure for deepfakes.

In the US, California’s AI Transparency Act, effective January 1, 2026, introduces additional obligations. This law applies to providers with over 1,000,000 monthly users and requires both visible and metadata-embedded watermarking for AI content. Non-compliance can result in civil penalties of $5,000 per day.

| Regulation | Jurisdiction | Key Requirement | Effective Date | Maximum Penalty |
|---|---|---|---|---|
| FTC Final Rule | United States | Ban on fake AI reviews/testimonials | Oct 21, 2024 | Up to $51,744 per violation |
| CA AI Transparency Act | California, US | AI watermarking and detection tools | Jan 1, 2026 | $5,000 per day |
| EU AI Act (Art. 50) | European Union | Machine-readable AI content marking | August 2026 | Up to €15 million or 3% of global annual revenue |
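To make the penalty figures above concrete, here is a rough back-of-the-envelope sketch in Python. It only multiplies out the ceilings quoted in this article ($51,744 per violation, $5,000 per day, and the EU's €15 million or 3% of global annual revenue). The function names are illustrative, and treating the EU cap as the higher of the two amounts is an assumption of this sketch, so read the results as upper bounds, not predictions or legal advice.

```python
# Ceilings quoted in this article; all function names are illustrative.
FTC_PENALTY_PER_VIOLATION_USD = 51_744
CA_PENALTY_PER_DAY_USD = 5_000
EU_FIXED_CAP_EUR = 15_000_000
EU_REVENUE_SHARE_PERCENT = 3


def ftc_exposure(violations: int) -> int:
    """Maximum FTC civil penalty if every violation drew the full amount."""
    return violations * FTC_PENALTY_PER_VIOLATION_USD


def california_exposure(days_non_compliant: int) -> int:
    """Maximum California penalty for a given number of non-compliant days."""
    return days_non_compliant * CA_PENALTY_PER_DAY_USD


def eu_cap(global_annual_revenue_eur: int) -> int:
    """EU ceiling. Assumption: the higher of the fixed cap and the revenue share applies."""
    revenue_based = global_annual_revenue_eur * EU_REVENUE_SHARE_PERCENT // 100
    return max(EU_FIXED_CAP_EUR, revenue_based)


if __name__ == "__main__":
    print(ftc_exposure(10))          # ten fake reviews
    print(california_exposure(30))   # thirty days out of compliance
    print(eu_cap(1_000_000_000))     # EUR 1 billion in global revenue
```

Under these assumptions, ten violations of the FTC rule top out at $517,440, thirty days of non-compliance in California at $150,000, and a company with €1 billion in global revenue faces an EU ceiling of €30 million.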

Major platforms like Meta, YouTube, and TikTok are already adopting the Coalition for Content Provenance and Authenticity (C2PA) standard, which embeds verifiable metadata in digital files. For US marketers, this means aligning with these standards to maintain global reach. Ensuring accurate "metadata hygiene" is now essential - both to embed correct AI provenance data and to avoid mislabeling authentic content as AI-generated. Brands can also use AI-powered Instagram tools to streamline content creation while maintaining these disclosure standards.

Getting disclosure right is simpler than you might think. A clear, consistent approach keeps you compliant, builds trust, and ensures your content strategy stays on track.

Clarity is key. Use straightforward terms like "AI-generated", "Created with AI," or "Artificial intelligence was used in this content" to make it obvious that AI played a role. Place these labels prominently at the beginning of captions or posts - don’t tuck them away where they might be missed.

For visual content, consider adding an "AI-generated" watermark directly on images or video frames. This ensures transparency even for viewers who skim past captions. Tools like Truepic can help you add these watermarks seamlessly.

If you’re using third-party apps like Canva or Runway to edit content and platform-specific tools aren’t available, include hashtags like #AIgenerated or #MadeWithAI in the first line of your caption. This simple step can help avoid policy violations.
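A simple pre-publish check can catch captions where the label is buried. The Python sketch below only tests whether the first line of a caption contains one of the labels or hashtags mentioned above. The function names and the label list are illustrative assumptions, not any platform's API, and a plain substring match like this cannot judge whether a disclosure is legally sufficient.

```python
# Labels and hashtags taken from this article, lowercased for matching.
DISCLOSURE_LABELS = (
    "ai-generated",
    "created with ai",
    "artificial intelligence was used in this content",
    "#aigenerated",
    "#madewithai",
)


def first_line(caption: str) -> str:
    """Return the first non-empty line of a caption, or '' if the caption is blank."""
    stripped = caption.strip()
    return stripped.splitlines()[0] if stripped else ""


def has_leading_disclosure(caption: str) -> bool:
    """True if the first line of the caption contains a known disclosure label."""
    line = first_line(caption).lower()
    return any(label in line for label in DISCLOSURE_LABELS)


if __name__ == "__main__":
    print(has_leading_disclosure("AI-generated: our new summer drop\nShop now"))
    print(has_leading_disclosure("Our new summer drop\n#AIgenerated"))
```

The first caption passes because the label opens the post. The second fails because the hashtag sits on the second line, which is exactly the "tucked away" placement to avoid.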

"Labeling AI-generated content isn't just about ethics; it's good business. It future-proofs your brand, signals intentionality, and gives customers a reason to trust what they read." - Mokshious

Next, take advantage of the tools provided by the platforms themselves to ensure your disclosures are consistent.

Most major platforms now offer built-in tools to label AI-generated content, and using them is a must. For example, YouTube requires creators to enable the "altered content" toggle in YouTube Studio when uploading synthetic media. TikTok includes an "AI-generated content" toggle in the "More options" menu before posting. Instagram and Facebook rely on C2PA metadata to automatically tag content with labels like "AI Info" or "Made with AI".

Here’s a quick look at how some platforms handle disclosure:

| Platform | Primary Disclosure Tool | Label Location | Automatic Detection? |
|---|---|---|---|
| YouTube | "Altered content" toggle in Studio | Expanded description or video player | Yes, for YouTube AI tools and deepfakes |
| Instagram / Facebook | C2PA Metadata / Branded Content tags | Beneath username or in post info menu | Yes, via C2PA metadata |
| TikTok | "AI-generated content" toggle | Beneath username | Yes, via C2PA and TikTok AI effects |
| Vimeo | Manual setting in video manager | Under video title and in embedded player | Yes, for Vimeo's AI tools |

Always activate these toggles during the upload process rather than relying solely on captions. Many platforms, like Meta and TikTok, now use AI to detect and label AI-generated content automatically when tools like DALL-E or Midjourney have been used.

One thing to watch out for is metadata hygiene. If you’ve used AI tools for small edits but the final content is mostly human-made, outdated metadata could trigger "false positive" labels. Tools like Adobe’s "Save for Web" or ExifTool can help clean metadata to avoid this.
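Before cleaning anything, it can help to see whether a file carries AI provenance markers at all. The Python sketch below is a crude heuristic, not a C2PA validator: it searches the raw bytes for strings that often accompany such metadata, namely the IPTC digital source type values `trainedAlgorithmicMedia` and `compositeWithTrainedAlgorithmicMedia` and the text `c2pa`. The function names are hypothetical, and a clean result does not prove the file is free of provenance data.

```python
from pathlib import Path

# Byte strings that commonly appear alongside AI provenance metadata.
# This is a heuristic list for illustration; it parses and validates nothing.
AI_METADATA_MARKERS = {
    b"trainedAlgorithmicMedia": "IPTC digital source type: AI-generated media",
    b"compositeWithTrainedAlgorithmicMedia": "IPTC digital source type: composite with AI elements",
    b"c2pa": "possible C2PA manifest",
}


def find_ai_markers(data: bytes) -> list[str]:
    """Return descriptions of every known marker found in the raw bytes."""
    return [desc for marker, desc in AI_METADATA_MARKERS.items() if marker in data]


def scan_file(path: str) -> list[str]:
    """Read a media file from disk and report any markers it contains."""
    return find_ai_markers(Path(path).read_bytes())


if __name__ == "__main__":
    sample = b"<xmp>http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia</xmp>"
    print(find_ai_markers(sample))
```

If a mostly human-made asset reports a marker, that is a cue to review its metadata before upload. If a genuinely AI-generated asset reports one, leave it in place so platforms can label it correctly.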

Mixing AI and human input can add credibility to your content. Use AI for brainstorming or drafting, but have a human review and refine the final output to ensure it aligns with your brand voice. This approach not only improves content quality but also simplifies disclosure.

For content that combines AI and human efforts, be upfront. A statement like "This article was drafted using generative AI and reviewed by our editorial team" helps your audience understand the process. This kind of transparency fosters trust and shows intentionality.

Track how AI is used - whether for voice cloning, image generation, or creating composites - and include clear AI disclosure guidelines in creator briefs, Statements of Work (SOWs), and QA checklists. This ensures that influencers and third-party contributors meet platform rules.

It’s also important to note that disclosure is required for "realistic" synthetic media - anything depicting real people doing or saying things they didn’t, altered footage of events, or lifelike scenarios that never happened. However, minor edits like color correction or background blurring generally don’t require a label.
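One way to keep this rule and the usage tracking consistent across a team is to record both in a small structure. The following Python sketch is illustrative only: the class and field names are assumptions, and the three flags mirror the "realistic" synthetic media cases described here, not any platform's official criteria.

```python
from dataclasses import dataclass, field


@dataclass
class AssetAIUsage:
    """Hypothetical per-asset record of how AI was used."""
    asset_id: str
    # Free-text log, e.g. "voice cloning", "image generation", "color correction".
    techniques: list[str] = field(default_factory=list)
    # The three "realistic synthetic media" cases that call for a label.
    real_person_doing_or_saying_unreal_things: bool = False
    altered_footage_of_real_event: bool = False
    lifelike_scene_that_never_happened: bool = False

    def needs_label(self) -> bool:
        """True if any realistic-synthetic-media flag is set."""
        return (
            self.real_person_doing_or_saying_unreal_things
            or self.altered_footage_of_real_event
            or self.lifelike_scene_that_never_happened
        )


if __name__ == "__main__":
    touch_up = AssetAIUsage("reel-001", ["color correction", "background blur"])
    cloned_voice = AssetAIUsage(
        "reel-002",
        ["voice cloning"],
        real_person_doing_or_saying_unreal_things=True,
    )
    print(touch_up.needs_label(), cloned_voice.needs_label())
```

Keeping the technique log separate from the flags means minor edits are still recorded for QA without automatically triggering a label.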

Early data from YouTube suggests that while "altered or synthetic" banners might slightly lower click-through rates (CTR), they significantly boost trust metrics among viewers. This balance between transparency and quality strengthens audience confidence while keeping your content compliant.

Navigating the challenges of undisclosed AI-generated content requires a thoughtful approach, and UpGrow offers solutions that align efficiency with transparency. In 2026, maintaining an ethical and engaging Instagram presence is all about balancing AI-driven tools with clear disclosures. UpGrow's platform is designed to help creators grow authentically while staying compliant with both regulations and platform guidelines.

UpGrow uses advanced AI-targeting technology to connect you with real followers. By applying filters like location, age, gender, and language preferences, the platform ensures your AI-assisted content reaches the right audience. This combination of patented tools and expert insights helps you build a following that truly aligns with your brand.

Transparency in performance metrics is essential to back up the authenticity promised in your AI disclosures. UpGrow's live dashboard tracks key metrics - like saves, shares, and comment depth - giving you a deeper understanding of how your content is performing. This goes beyond surface-level likes and helps identify potential issues, such as content being flagged as spam or suppressed by algorithms.

Interestingly, while AI labels might slightly reduce click-through rates, they significantly enhance viewer trust. The platform also helps address false positive AI labels caused by lingering metadata, thanks to tools designed for quick metadata cleanup.

UpGrow's viral content library streamlines content creation while maintaining human oversight, which is essential for building trust. Features like optimizing your Instagram profile and the Boost™ tool work together to amplify content that resonates with your audience, ensuring your growth remains organic and compliant with Instagram's policies. With 24/7 growth support and live chat, UpGrow provides the consistency needed to foster long-term credibility without sacrificing transparency or authenticity.

As Efe Onsoy, CEO of MoreThanPanel, aptly states:

"Ethical AI has become a competitive advantage, rather than a choice."

This article highlights how ethical AI disclosure is more than just a regulatory requirement - it’s a way to strengthen brand trust. Being upfront about AI-generated content lays the groundwork for lasting relationships with your audience. With 75% of consumers now expecting brands to disclose when they use AI-generated content, transparency has shifted from being optional to absolutely necessary. Marketers who adopt clear labeling practices today are setting themselves apart in a world where trust fuels both engagement and loyalty.

The risks discussed earlier are real, ranging from legal penalties to platform-specific enforcement, and they underscore why a proactive approach to disclosure is critical. But it’s not just about avoiding trouble - transparent practices can actually enhance your brand. For example, YouTube research shows that AI labels can meaningfully improve trust among viewers.

As Boluwatife Ola-Ajayi from DMi Digital Marketing aptly puts it:

"In an era where authenticity drives engagement and loyalty, disclosure reinforces credibility rather than diminishing it."

Taking action is simple: use clear labels like "AI-generated" or "Created with AI", place them in visible areas - such as at the start of captions or as on-screen notes - and make use of platform disclosure tools when uploading content.

AI-generated user-generated content (UGC) refers to text, images, videos, audio, or code created or significantly shaped by artificial intelligence tools, often with minimal human involvement. Being upfront about AI-generated UGC is crucial for transparency, aligning with changing regulations, and fostering trust. It helps ensure audiences are aware when content has been influenced by AI.

To ensure AI disclosures are impossible to miss, position them right at the beginning of your content. Use methods like captions, overlays, opening statements, or platform-specific prompts to grab attention immediately. The key is to make sure viewers encounter the disclosure before diving into the content - ideally within the first few seconds or lines. For platforms like YouTube, take advantage of built-in tools, such as disclosure toggles during uploads, to stay aligned with their rules.

Minor edits made by AI usually don’t need an “AI-generated” label unless they bring about major changes to the content or are part of a more extensive AI-created piece. The focus of disclosure rules is on ensuring transparency when AI plays a significant role.